|

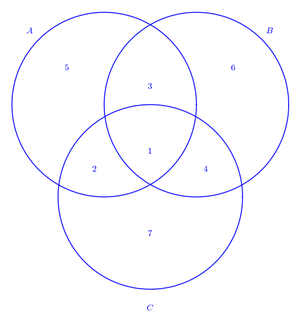

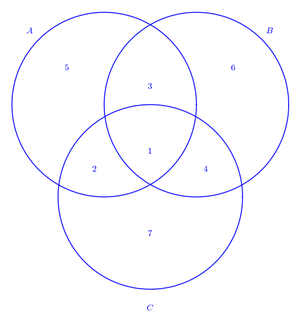

| Fig. 1: A Venn diagram used for error correction with hamming codes. |

When communication devices became more prevalent throughout the 20th century, a major fundamental question was left unanswered: how much information could be transmitted over a channel? The founder of information theory, Claude Shannon, published his seminal paper answering this question in 1948. [1] But before delving into the mathematics behind Shannon's paper, we will explore some basic questions that can be applied using information theory concepts.

Suppose two students (Harry and Sally) in a classroom pass messages back and forth in class. In order to write quick messages it is agreed upon that when Harry writes a "1" he means "what the teacher is saying is funny." A "0" implies what the teacher is saying is boring. Is there a way to quantify how much information Harry is passing to sally? Information theory indeed allows us to quantify this message. Take three examples: If Harry always writes a "1" to Sally, then Sally already knows what Harry has written before the message was even sent. No information has been sent. On the other hand, if Harry always writes a "0," no information is sent either. If Harry writes a "1" half the time and a "0" the other half, we can say he sent one bit of information. These three points indicate concavity as a function of the probability of receiving a "1." The randomness, uncertainty, or information contained in this message is defined as the entropy of the message.

From the previous example we can see that X is a discrete random variable - a "1" with some probability p(1) or a "0" with probability 1 - p(1). Now if Harry and Sally decide to expand their codebook to contain 10 different types of messages (the numbers 0-9), we can still calculate the expected amount of information in each message, so long as we know the probability that each message will get sent. Yet this explanation of entropy assumes each message sent is completely independent of previous messages sent. In practice this is often not the case. For example if the message passed is a series of letters forming a message, the probability of each letter occurring is strongly influenced by the previous characters in a message. A "u" is almost certainly going to come after a "q." If harry and sally are smart, they can add these patterns to their codebook. Rather than passing messages one by one, they can block messages the occur together with high frequency into one single message that has a lower entropy. This is the basis of Huffman and arithmetic coding, which is used to compress files on a computer. [2]

With information quantified, we now return to Shannon's paper. While a message might contain a certain amount of information, it may all be lost or distorted while it is being sent. Harry may write a sequence of "1's" and "0's", but there is no guarantee that the message will remain intact by the time it gets to Sally. He must pass the message to her through a bunch of classmates, all of whom might change the message. Is there a way to quantify the reliability, or capacity of this channel to send information? Shannon proposed a solution. The mutual information between two variables X and Y is defined as

If a message is sent with probability distribution X and a message is received with probability distribution Y then the mutual information describes the similarity between the two messages, how much of the message got through the channel. This may seem abstract, but in reality we deal with this problem all the time. When we speak on the phone are message gets distorted by noise if we do not have good reception, and often the person on the other end of the line cannot decipher what you are trying to say. The channel capacity is defined as the mutual information between the message sent and the message received. For the case of the gaussian channel (the noise in the call is gaussian and uncorrelated with earlier noise), the mutual information takes on a special form. If a gaussian input X is received at the output Y with some gaussian noise Z, then the capacity of the channel is given as

Where P is the variance of the input signal X, and N is the variance of the noise signal Z.

Before ending our discussion, I will throw in one twist to the problem of Harry passing messages to Sally. Suppose they have been successful passing messages for some time now, but Fred, who always receives Harry's message before passing it on to Sally, decides to play a game with them. Each time a message is passed (a set of bits), Fred changes 1 bit in the message. Harry, who is very careful about what he writes, does not like his messages being distorted and wants to come up with a way for Sally to retrieve the entire message intact. Harry tries to think of ways to add redundancy to his message so that if one bit gets changed she can still figure out the entire message with the other bits. Harry's question is, what is the best way to do this?

His first thought is to use 3 bits for every message bit that is sent. That way, Sally can take a simple majority of each of three bits to determine what Harry meant. A 111 → 1, a 110 → 1 and a 010 → 0. As long as Fred only changes one bit at a time, Harry can be sure that Sally will decode his message correctly. But this method is problematic, since Harry does not want his messages to be three times as long. So he comes up with a new coding technique which is often called a "hamming code." Harry will be able to have error correction for every 4 bits of information using just 3 parity (error correcting) bits.

Hamming codes, or in this case (7,4,3) hamming codes can be deciphered using the Venn diagram in Fig. 1. Harry places his information bits in locations 1-4 in the Venn diagram. [3] He then fills in the parity bits 5-7 by making sure there are an even number of bits in circles A, B, and C. He can then send four bits of information to Sally, for example 0100 101 who can fill in the bits on her own Venn diagram. She will immediately be able to figure out if Fred changed a bit because not all the circles will have an even number of "1's." Additionally, she will be able to pinpoint exactly which bit was changed, and correct it. Harry's a pretty smart guy! Not convinced this works? Try out the Venn diagram for yourself, creating a message, distorting one of the bits, and trying to reconstruct it. Similar coding strategies can correct for even more distorted bits. For example, CD players use Reed-Solomon codes that can detect bursts of up to 4000 errors. [2]

© Eric Johnston. The author grants permission to copy, distribute and display this work in unaltered form, with attribution to the author, for noncommercial purposes only. All other rights, including commercial rights, are reserved to the author.

[1] C. E. Shannon, "A Mathematical Theory of Communication," The Bell System Tech. J. 27, 379 (1948).

[2] T. A. Cover and J. A. Thomas, Elements of Information Theory, 2nd Ed. (Wiley-Interscience, 2006).

[3] R. W. Hamming, "Error Detecting and Error Correcting Codes," The Bell System Tech. J. 29, No. 2 (1950).